Business-Continuity Planning with Limited Resources

Millions of office dwellers sit down at their desks every morning and assume that their computers will work and that no information or data was lost from the day before.

Most people never ask, “What if?” But we live in a world where severe weather and human acts can wreak havoc, knock out power and communications systems, and halt company operations for a short time or an extended period. The vast majority of companies can’t survive without efficient, reliable, 24/7 technology infrastructures and the mission-critical data centers that enable them to operate.

In the world of chief operating officers, heads of facilities, and IT managers, making sure these systems aren’t out of commission for even 1 millisecond is challenging. Most importantly, it’s good business to have systems operate continuously and efficiently, and at the lowest energy cost. For public companies that trade or hold other people’s money, business continuity is a requirement of the U.S. Securities and Exchange Commission, the National Association of Securities Dealers, and the U.S. Department of the Treasury.

The Importance of Green Data Centers

Business-continuity data centers face high energy costs and wasted kilowatts caused by poor computer utilization, inefficient design and distribution, unused or legacy applications, and software/hardware adjacencies. Over the past 5 years, while the energy expenses associated with operating these facilities have soared by more than 100 percent, firms have been forced to do “as much with less.”

To meet this challenge, data centers must adopt more creative designs and operations that incorporate simple, energy-efficient technologies, such as thermal storage, spot cooling, variable speed-drive pumps, and ambient free cooling, to cut overall energy costs. While green solutions can be financially challenging, many are viable and deployable.

Collectively, we see data-center temperatures rising by 5 to 10 degrees F over the next few years. Some manufacturers assert that every degree over specification decreases the life of new equipment by a year. Swift advances in technology mean that upgrades will lead to equipment replacement well before current hardware fails.

Adding a generator, a module, or a chiller, and adding to the budget – vendor-serving solutions that many data center users subscribe to – is like putting ice cubes in an oven. The new, more sensible strategy is to reduce the waste product of energy, which is heat. One way is by utilizing more efficient chips that work without firing on all modules at once. They run cooler and save energy.

Energy limitations are real, contributing to the argument for adding remote, asynchronous data centers. Demand for energy is outpacing supply with the decommissioning of fossil fuel and nuclear power plants, and is easily outpacing eco-friendly renewable or low-carbon sources. Decentralized energy solutions – cogeneration, hydrogen, geothermal, methane, etc. – will be attractive options. There’s also value in locating data centers in geographic locations that would enhance the total cost of ownership and satisfy other reliability considerations.

Business-continuity plans (BCPs) involve people, sophisticated technology, equipment and back-up equipment, transmission systems (hard-wired and wireless), power and back-up power, and brainpower.

Business-Continuity Planning is Good Business

As part of protecting the global and U.S. economies, the U.S. Congress passed specialty legislation regulating all industries (some more than others). The Sarbanes-Oxley Act (the Public Company Accounting Reform and Investor Protection Act of 2002), HIPAA (the Health Insurance Portability and Accountability Act), the SEC-approved NASD Rules 3510 and 3520 governing BCPs, the Patriot Act, and other legislation provide minimum guidelines to follow. Under these rules, a company must have a business-continuity plan (to preserve data), document the plan, and test the plan.

Data points for the sources of interruption, duration, average time to repair, mean time between failures, and the business impact in dollars, as well as the devaluing of a company’s brand, are no longer scarce. Developing a database on “Acts of God” and human intervention, along with a leveling matrix of synchronous and asynchronous distances to a primary data center, is critical. These components lend significant meaning, and must be factored into the process.
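As a rough illustration of how these data points combine, the sketch below turns mean time between failures and average time to repair into an availability figure and an expected yearly cost of downtime. The function names and the cost-per-hour input are assumptions made for the example, not figures from any regulation or vendor, and the model ignores brand damage and correlated failures.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability: the fraction of time a system is up."""
    return mtbf_hours / (mtbf_hours + mttr_hours)


def expected_annual_downtime_cost(
    mtbf_hours: float, mttr_hours: float, cost_per_hour: float
) -> float:
    """Expected yearly business impact in dollars (hypothetical simple model)."""
    downtime_hours = (1.0 - availability(mtbf_hours, mttr_hours)) * HOURS_PER_YEAR
    return downtime_hours * cost_per_hour


if __name__ == "__main__":
    # Illustrative inputs only: one failure roughly every 999 hours, 1 hour to repair.
    print(availability(999, 1))
    print(expected_annual_downtime_cost(999, 1, cost_per_hour=1000))
```

Running the same calculation per interruption source (power, telecom, weather, human error) gives a simple way to rank where mitigation dollars matter most.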

People, Advanced Technology, and Brainpower

Once you’ve established your data points, some of the vital ingredients in a BCP are:

- Establishing priorities, protocols, and personnel who are able and willing to execute tasks in the plan.

- Determining your most durable method(s) of communication: phones, radios, servers, WAN, LAN, satellite, RF, or free-space optics (FSO)?

- Executing risk mitigation of data on in-flight trades, records storage, evacuation, etc.

- Establishing a remote data-recovery protocol.

- Communicating to clients the status of business and anticipated duration of consequences.

- Focusing on core business and brand-protection protocols. (In emergencies, non-critical personnel should stay at home and await further direction.)

- Communicating the status of the event and management expectations to staff. Give real goals.

- Developing living, travel, and sustenance plans for events.

- Setting reasonable goals to recover data and business assets on an hour-by-hour, day-by-day, and week-by-week basis.

- Distributing electronic and hard copies of the plan both on-site and to homes.

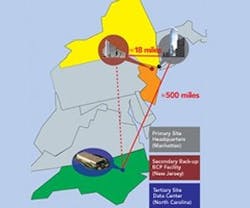

The critical hardware for an effective BCP consists of data centers (primary, secondary, and tertiary) and the infrastructure that connects them. Data centers should be designed and sited to operate synchronously (i.e., they transmit data simultaneously, thereby losing no data if there’s an interruption).
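Distance is what decides whether two sites can realistically run synchronously, because every write has to wait for a round trip over the fiber. The sketch below is a back-of-the-envelope estimate only. It assumes light travels through fiber at roughly 200 km per millisecond (about two-thirds of its speed in a vacuum) and ignores switching equipment and indirect cable routes, both of which add delay in practice. The function names and the latency budget are hypothetical.

```python
FIBER_KM_PER_MS = 200.0  # assumed propagation speed of light in optical fiber


def round_trip_ms(distance_km: float) -> float:
    """Propagation delay for a signal to reach the remote site and return."""
    return 2.0 * distance_km / FIBER_KM_PER_MS


def max_sync_distance_km(latency_budget_ms: float) -> float:
    """Farthest site that fits within a given round-trip latency budget."""
    return latency_budget_ms * FIBER_KM_PER_MS / 2.0


if __name__ == "__main__":
    for km in (10, 50, 100, 1000):
        print(f"{km:>5} km -> {round_trip_ms(km):.2f} ms round trip")
```

Sites that fall outside the budget an application can tolerate are candidates for asynchronous operation instead.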

Prior to 9/11, many back-up data centers sat unoccupied until an incident occurred. Today’s thinking is that secondary and tertiary centers should be fully operational and serving a purpose 24/7, synchronously or asynchronously.

“Total Cost of Ownership” Provides a Game Plan

Data centers in major cities usually occupy a few thousand square feet or a few floors in a high-rise building. Outside of urban centers, they’re low-rise “boxes.” In the “one-size-does-not-fit-all” world of data-center design and implementation, your company’s data centers should be right-sized to fit your needs, but also to operate as part of an integrated system. Data centers are expensive to build and operate. To get the biggest bang for the buck, and not overbuild, apply total cost of ownership (TCO) analysis.

TCO incorporates:

- Real estate acquisition.

- Local construction costs and labor rates.

- Local IT labor rates.

- 20-year real estate taxes (city/state).

- 20-year personal property taxes.

- Utility rates.

- Utility capital expenditures for unique improvements.

- Telecomm capital expenditures for unique improvements.

- Corporate or company tax.

- Personal income tax.

- Sales tax on IT personal property.

- Sales tax on utility usage.

- Sales tax on telco usage.
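One way to put these line items to work is a simple comparison across candidate sites. The sketch below is a minimal model under stated assumptions: costs are split into one-time and recurring annual buckets, the horizon is 20 years to match the tax items above, and there is no discounting or inflation. The class, field, and site names are hypothetical, and the dollar figures are placeholders, not market data.

```python
from dataclasses import dataclass


@dataclass
class SiteCosts:
    name: str
    one_time: dict[str, float]  # e.g. real estate acquisition, construction, utility/telecom capex
    annual: dict[str, float]    # e.g. taxes, utility usage, IT labor


def total_cost_of_ownership(site: SiteCosts, years: int = 20) -> float:
    """Undiscounted TCO: one-time costs plus recurring costs over the horizon."""
    return sum(site.one_time.values()) + years * sum(site.annual.values())


def rank_sites(sites: list[SiteCosts], years: int = 20) -> list[SiteCosts]:
    """Order candidate sites from lowest to highest TCO."""
    return sorted(sites, key=lambda s: total_cost_of_ownership(s, years))


if __name__ == "__main__":
    # Placeholder figures for two hypothetical locations.
    urban = SiteCosts("urban", {"real_estate": 50e6, "construction": 80e6},
                      {"utilities": 6e6, "taxes": 3e6, "it_labor": 5e6})
    remote = SiteCosts("remote", {"real_estate": 10e6, "construction": 70e6, "telecom_capex": 15e6},
                       {"utilities": 3e6, "taxes": 1e6, "it_labor": 4e6})
    for site in rank_sites([urban, remote]):
        print(site.name, total_cost_of_ownership(site))
```

A fuller analysis would discount future cash flows and account for incentives negotiated with each city or state.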

TCO is often the driver by which cities and states get short-listed in a location search and are engaged in the competition for the user.

Another important factor in data-center siting is the human component necessary to operate and maintain the facility. Generally, valued employees and key vendors prefer to be located in and near the major urban markets where primary data centers are located – often near an organization’s headquarters. To cover your secondary and tertiary locations, the most compelling model requires some key personnel and vendors to move to strategic locations that may not be near urban centers.

The Future of Data Centers

Remote data manipulation and storage have never been better, faster, or deeper. Virtualization for most applications is now extremely reliable, and cloud-computing remote processing is realistic for asynchronous, low-cost, strategically located assets. Data triangulation between on-site, near-urban, and remote data centers is the key to an effective topology.

Asynchronous data centers will be located in regions of the world where the geographic location enhances the total cost of ownership and satisfies other reliability considerations. Users will look favorably on countries with geothermal energy.

Look for smaller, synchronous data centers near urban environments to house only critical applications due to high energy and maintenance costs in those locations. These data centers will be reasonably close to primary functions to utilize high-speed telecommunications fiber-optic transmission conduit that executes at the speed of business. Look for larger, asynchronous data centers to support cloud computing, critical application functions, and storage in non-urban, low-cost areas of the world.

Ronald H. Bowman Jr. is executive vice president at New York City-based Tishman Technologies Corp.